The next version of ChatGPT may just be called “Goblin.”

In a post on X, OpenAI CEO Sam Altman wrote: “What if we name the next model ‘goblin’, almost worth it to make you all happy…”

Whether the name sticks depends on how serious Altman was, but there is a whole story behind ChatGPT’s goblin problem.

what if we name the next model "goblin"

almost worth it to make you all happy…

— Sam Altman (@sama) May 10, 2026

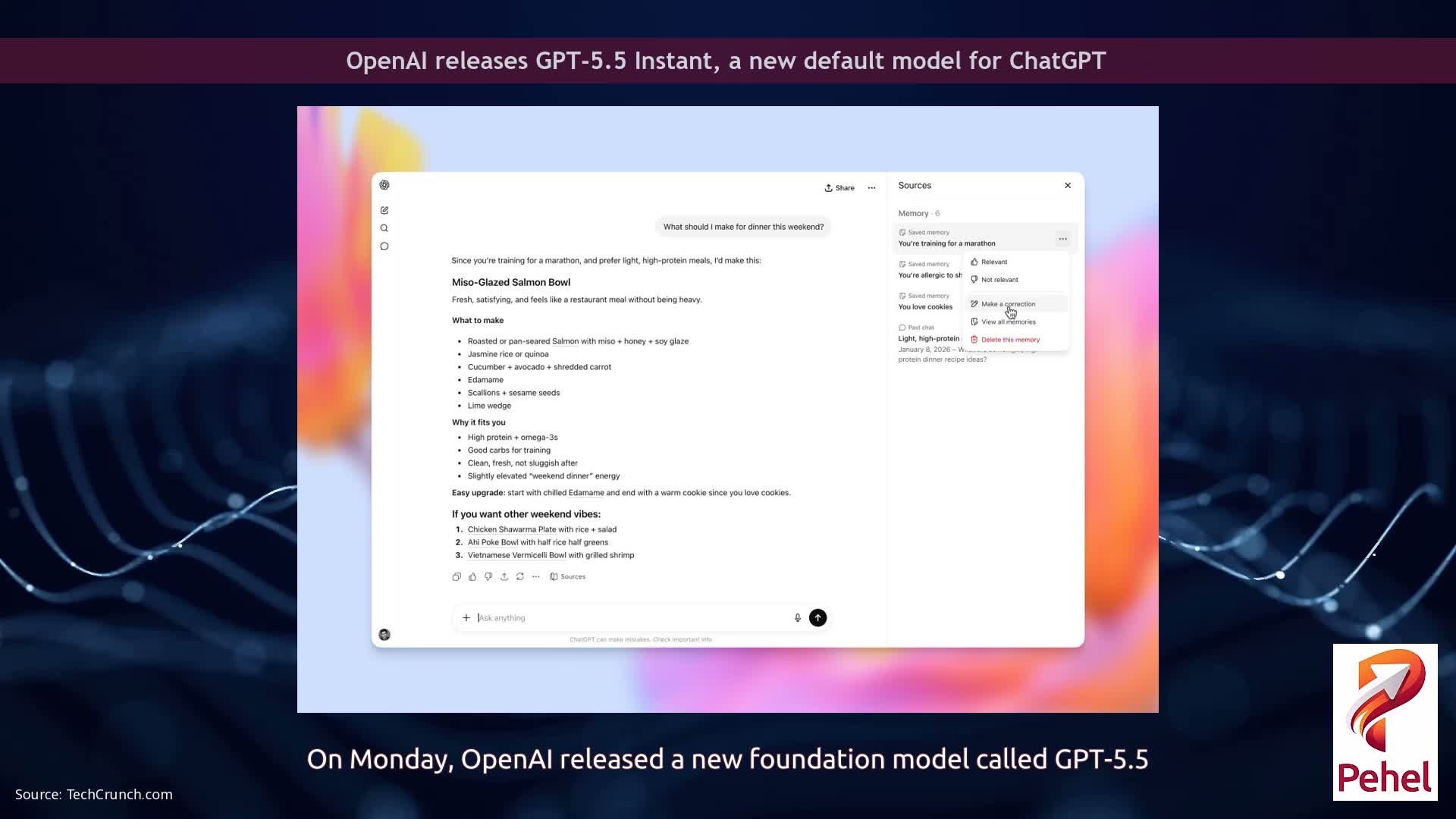

Following the release of GPT-5.5 last week, users spotted a line in its system prompt instructing the model to avoid mentioning “goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures” unless they were directly relevant to the user’s request.

The discovery led OpenAI to publish a blog post explaining the source of the behavior.

According to the company, researchers began noticing a sharp increase in references to goblins and gremlins after GPT-5.1 launched last November.

OpenAI said usage of the word “goblin” increased by 175%, while “gremlin” mentions rose by 52% during that period.

This is an actual line that was added to the official system prompt for Codex for GPT-5.5 by OpenAI. Usually the system prompt is as minimal as possible, so I assume it would otherwise mention goblins a lot. AIs are weird.

— Ethan Mollick (@emollick.bsky.social) April 28, 2026

The company traced the behavior back to ChatGPT’s optional “nerdy” personality setting, which was available before March this year.

Part of that personality’s prompt encouraged the chatbot to acknowledge and enjoy the world’s “strangeness” and to discuss weighty subjects without becoming overly serious.

OpenAI said the nerdy personality generated 66.7% of all goblin mentions despite accounting for only 2.5% of total ChatGPT responses.

The company found that reinforcement learning rewarded outputs containing words such as “goblin” and “gremlin,” causing the chatbot to increasingly favor creature-related language.

OpenAI added that behaviors learned under one personality setting can spread to the model’s behavior more broadly during later stages of reinforcement learning and fine-tuning.

OpenAI said GPT-5.5 training had already started before the company fully identified the cause of the issue, which is why Codex included instructions limiting creature references.

The company also said the investigation helped it develop additional tools for auditing and correcting unintended model behavior.
